How We Cut Our LLM Costs 60% With Request Routing

A practical breakdown of how intelligent routing, caching, and model selection through an LLM gateway can dramatically reduce your AI infrastructure costs.

Latest news and updates from LLM Gateway

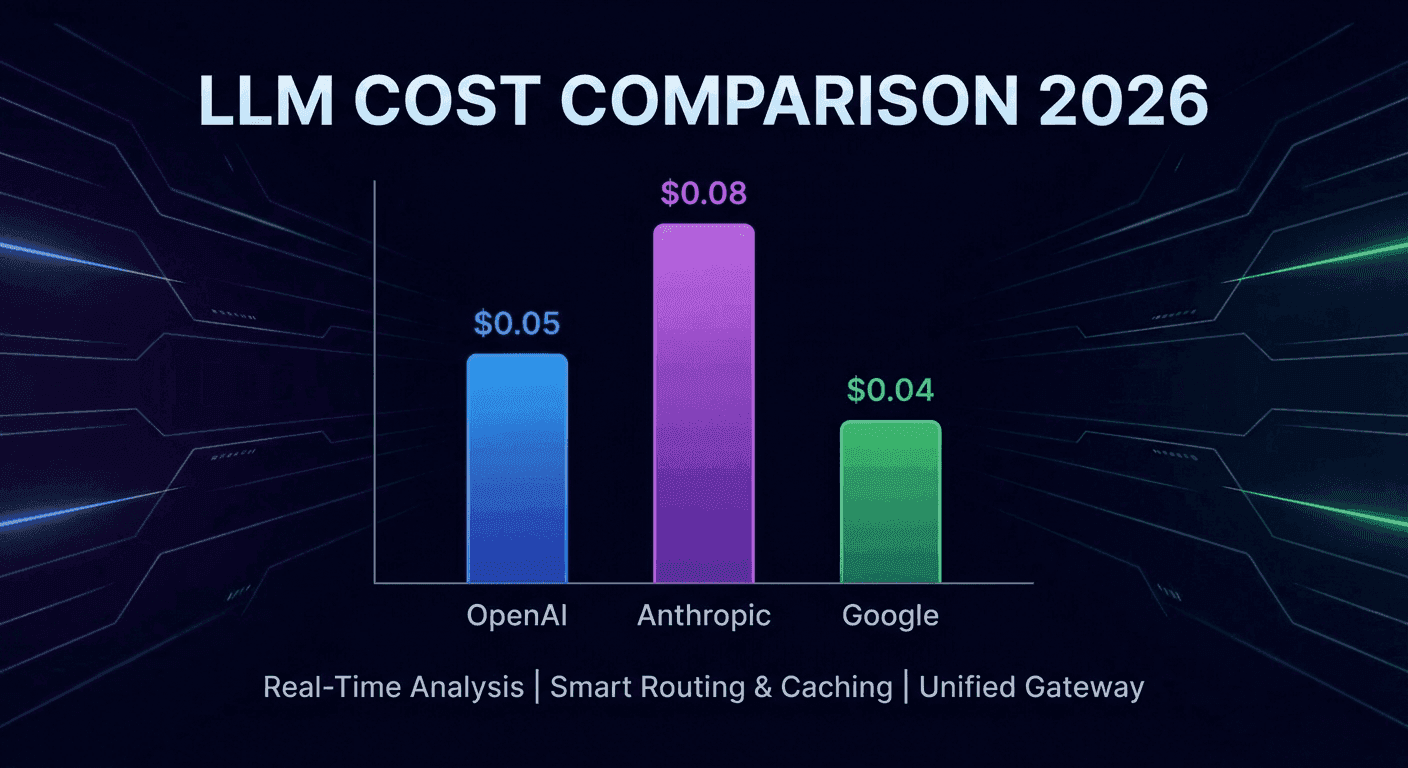

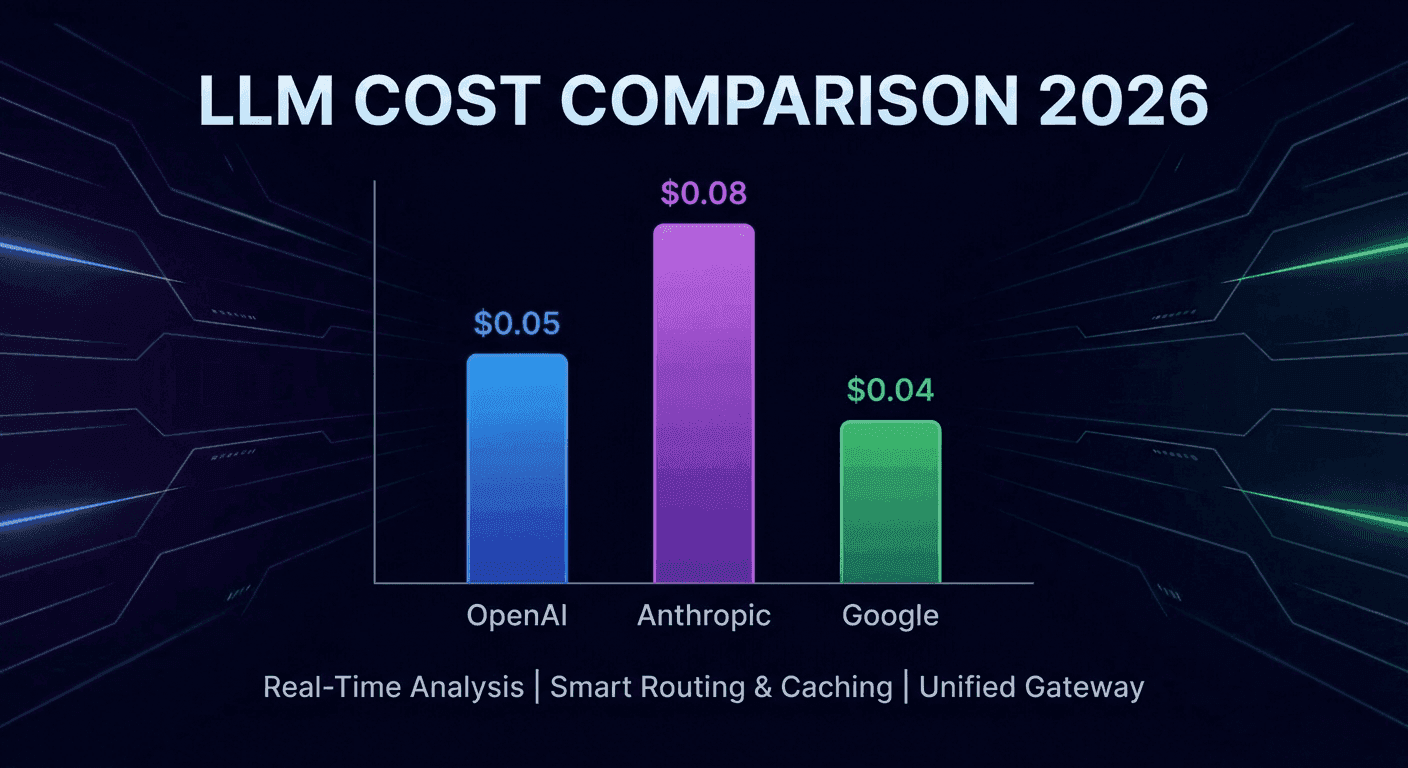

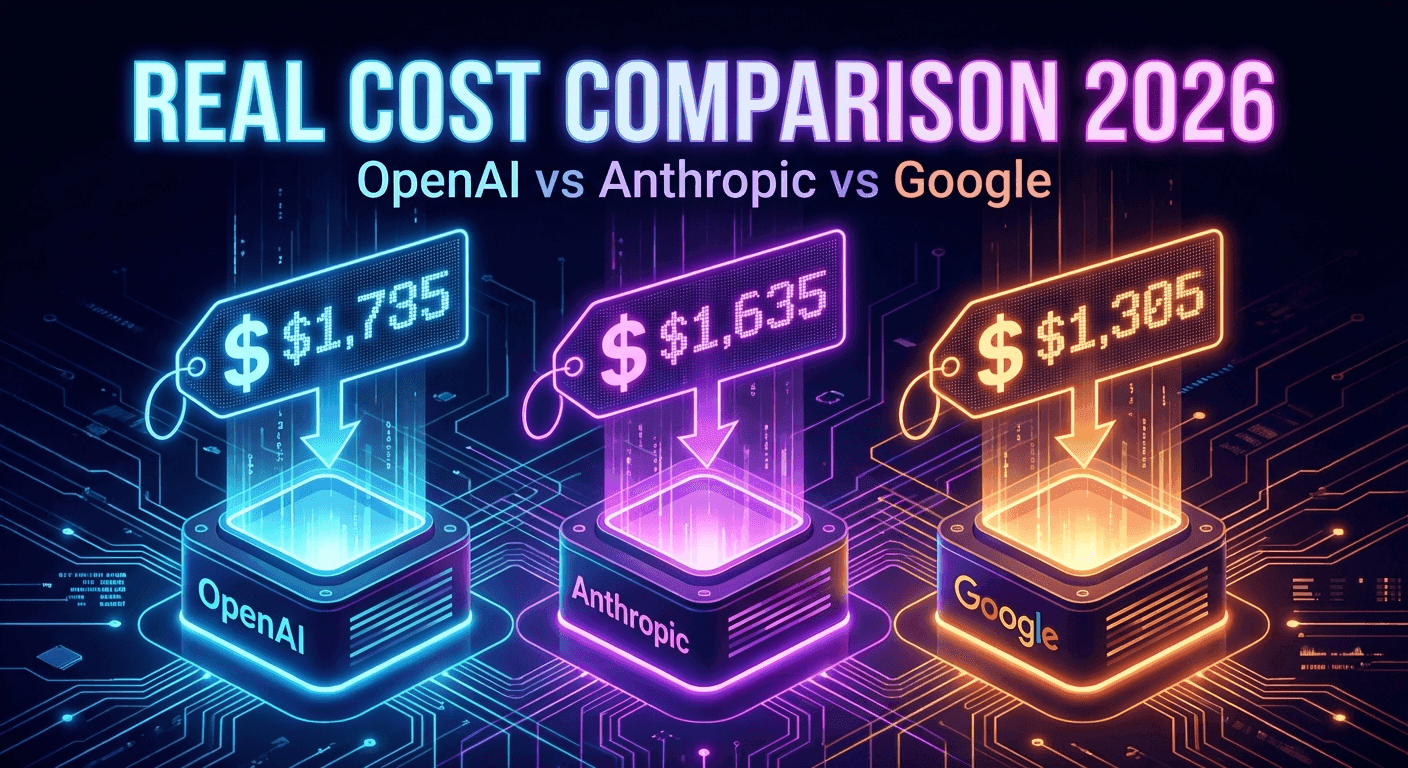

Side-by-side pricing comparison of GPT-5, Claude Opus 4.6, and Gemini 2.5 Pro with real cost calculations for production workloads.

LLM Gateway partners with Alibaba Cloud to bring you 20% off 26 Qwen AI models — including Qwen3 Max, Qwen3 Coder, QwQ reasoning, vision-language, and image generation models.

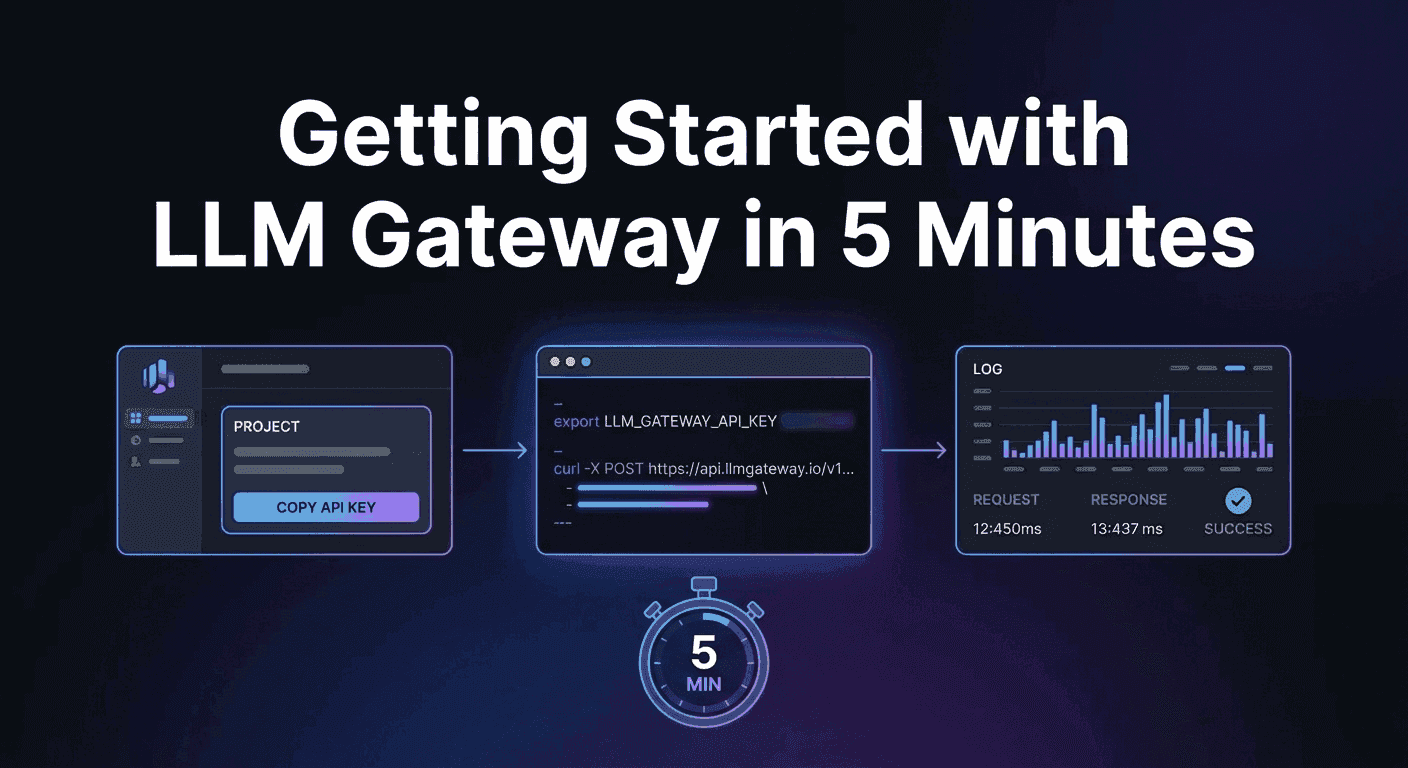

A step-by-step guide to making your first LLM API request through LLM Gateway — from signup to seeing results in your dashboard.

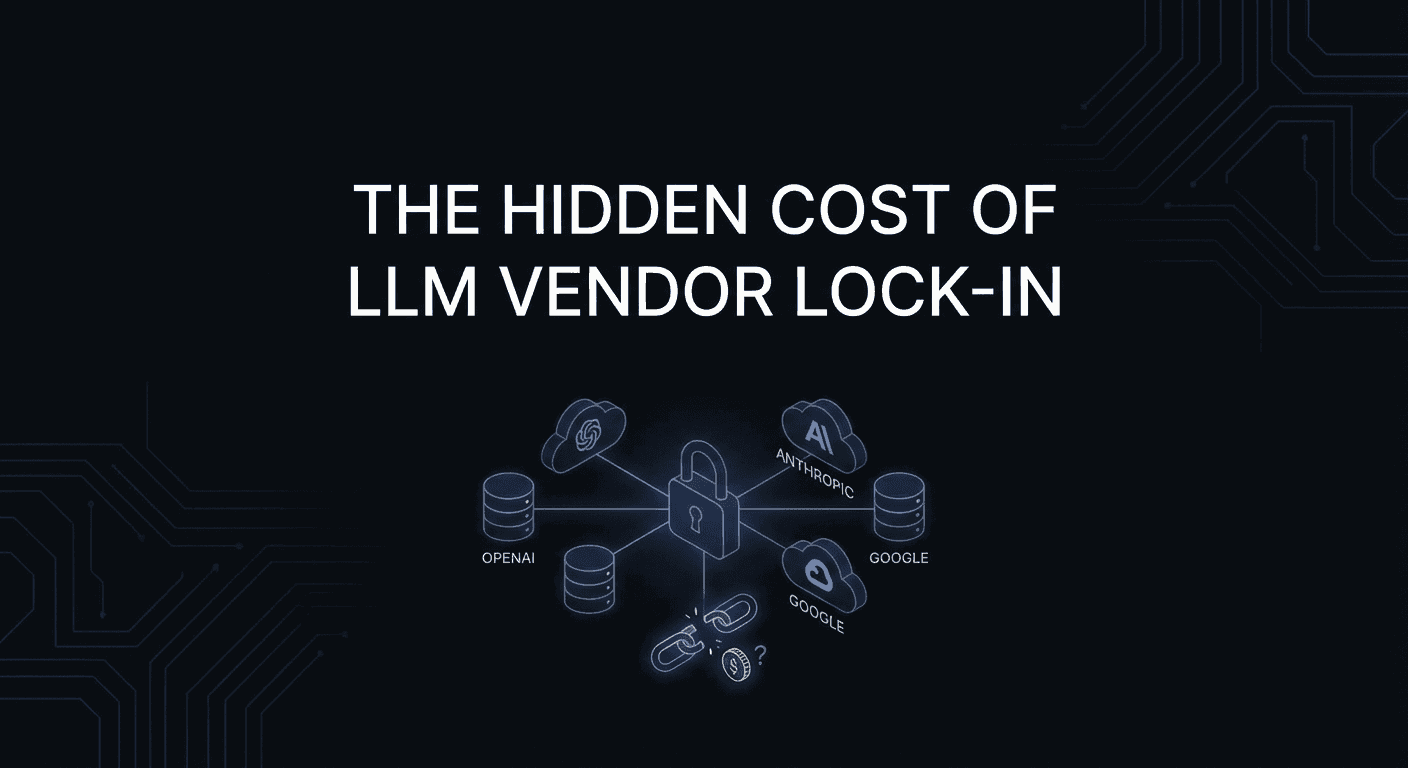

Why building directly against a single LLM provider's API is riskier than you think, and how a gateway layer protects your AI investment.

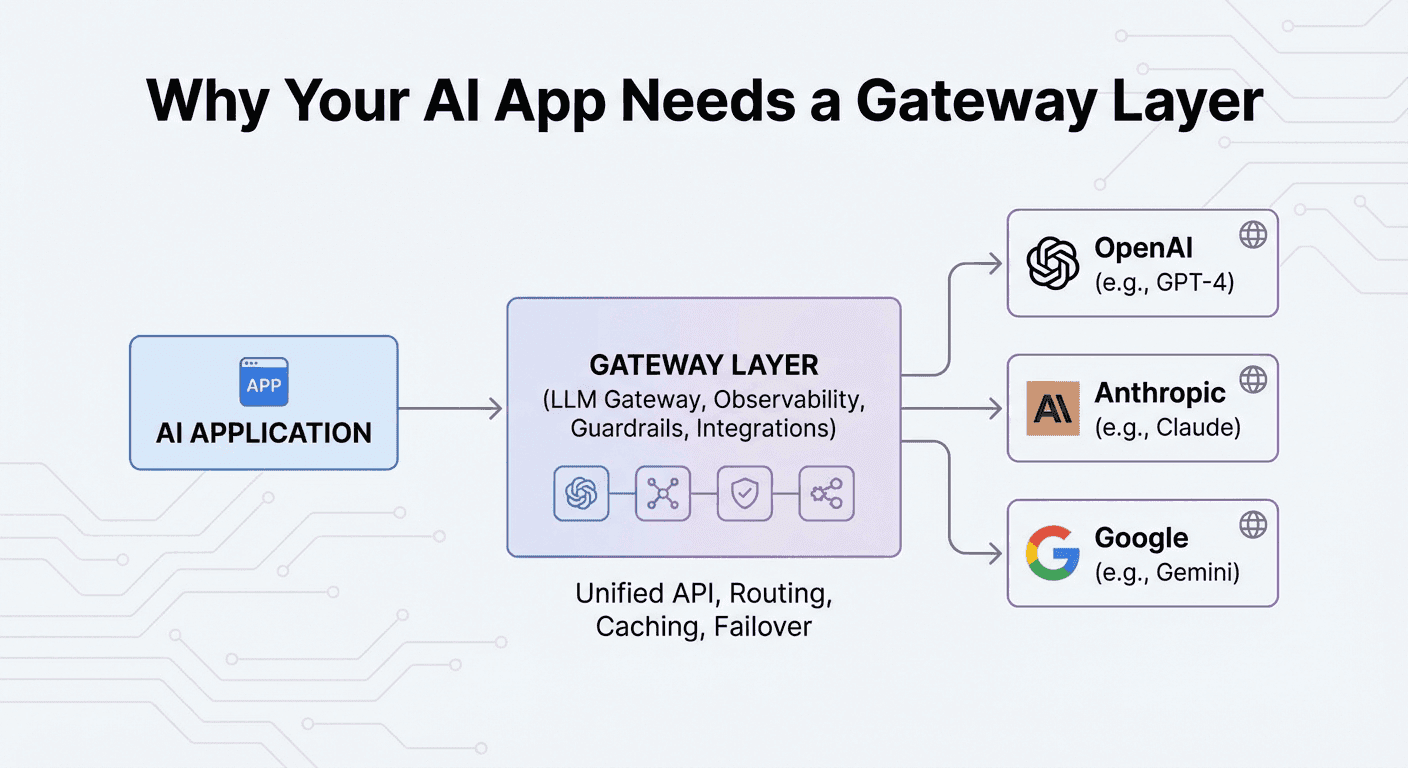

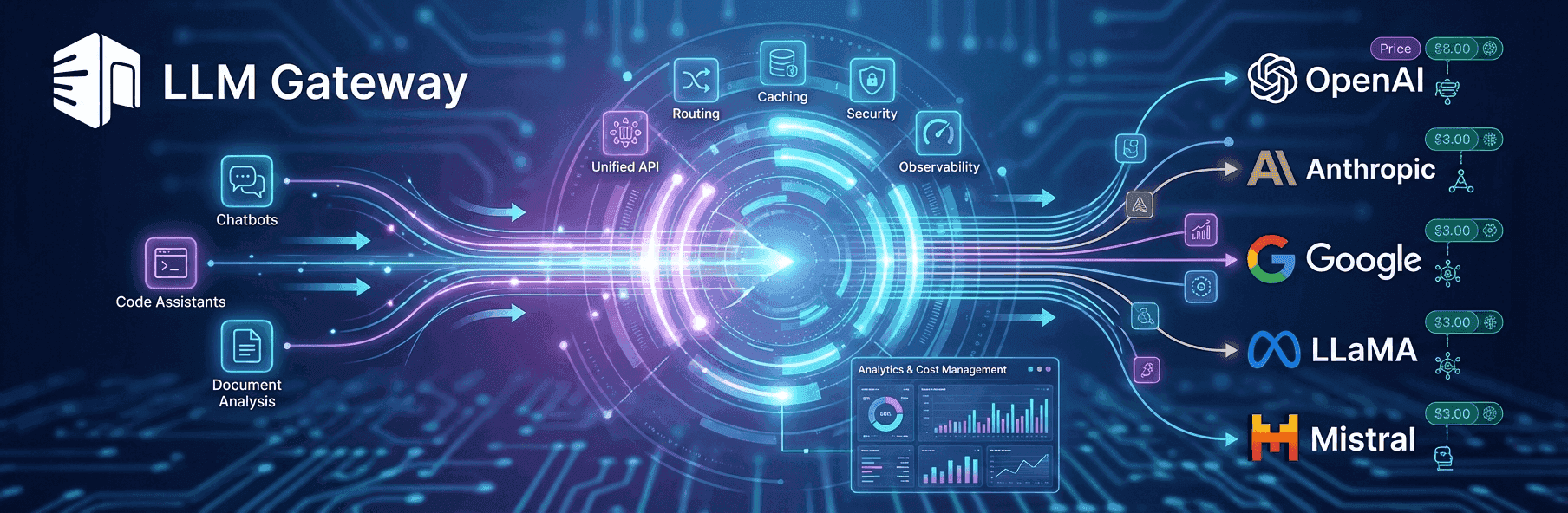

What an LLM gateway does, why it matters, and how it lets you ship AI features faster by abstracting away provider complexity.

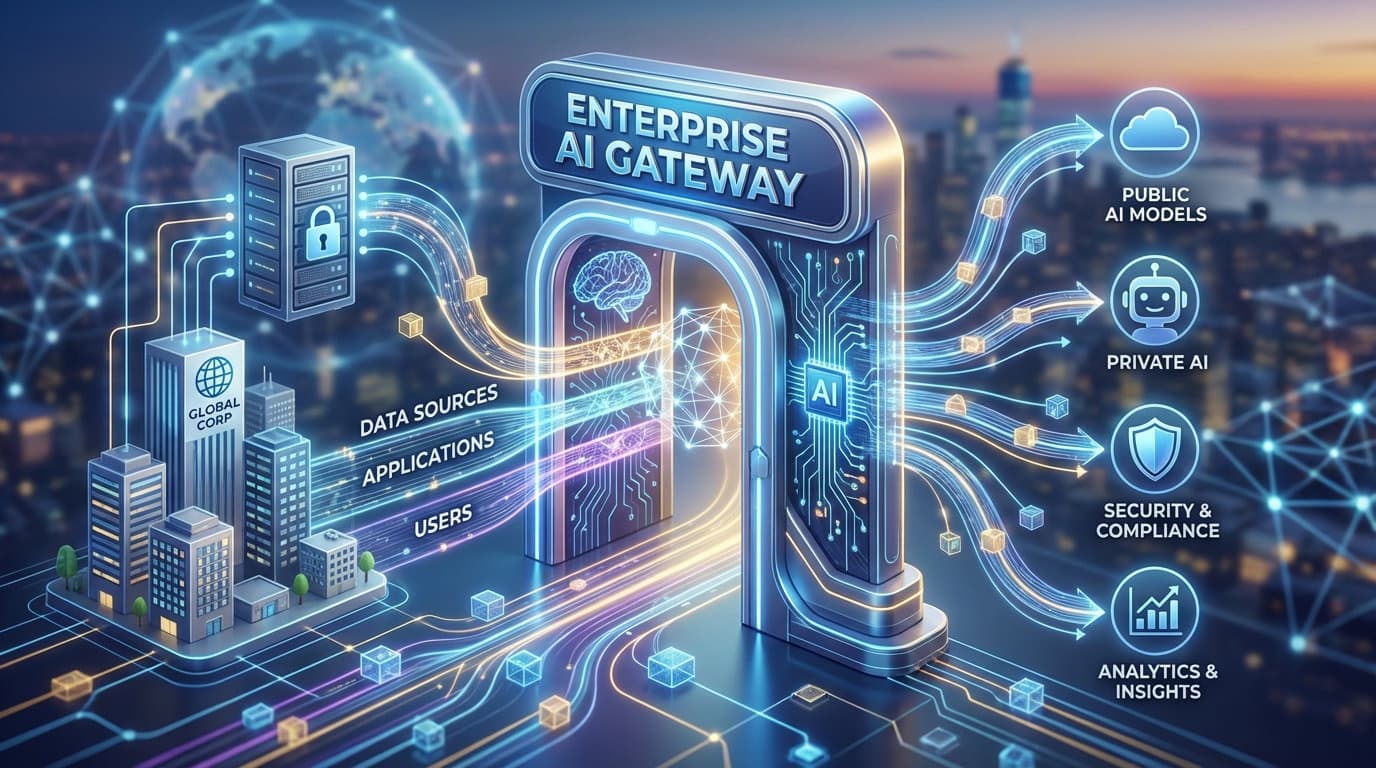

Learn why simple LLM proxies aren't enough and how a unified AI gateway delivers centralized access control, cost visibility, compliance, and security.

Learn what an LLM Gateway is, why you need one, and how it simplifies integrating, managing, and deploying large language models in production.

How we deploy our Next.js apps on Google Cloud Platform without relying on Vercel.

Three months of updates: 15+ new models, team management, referral program, tiered pricing, data retention, new providers, and much more.

Use the CYBERMONDAY promo code to get 50% off credits and the Pro plan for a limited time.

Use the BLACKFRIDAY promo code to get 50% off credits and the Pro plan for a limited time.

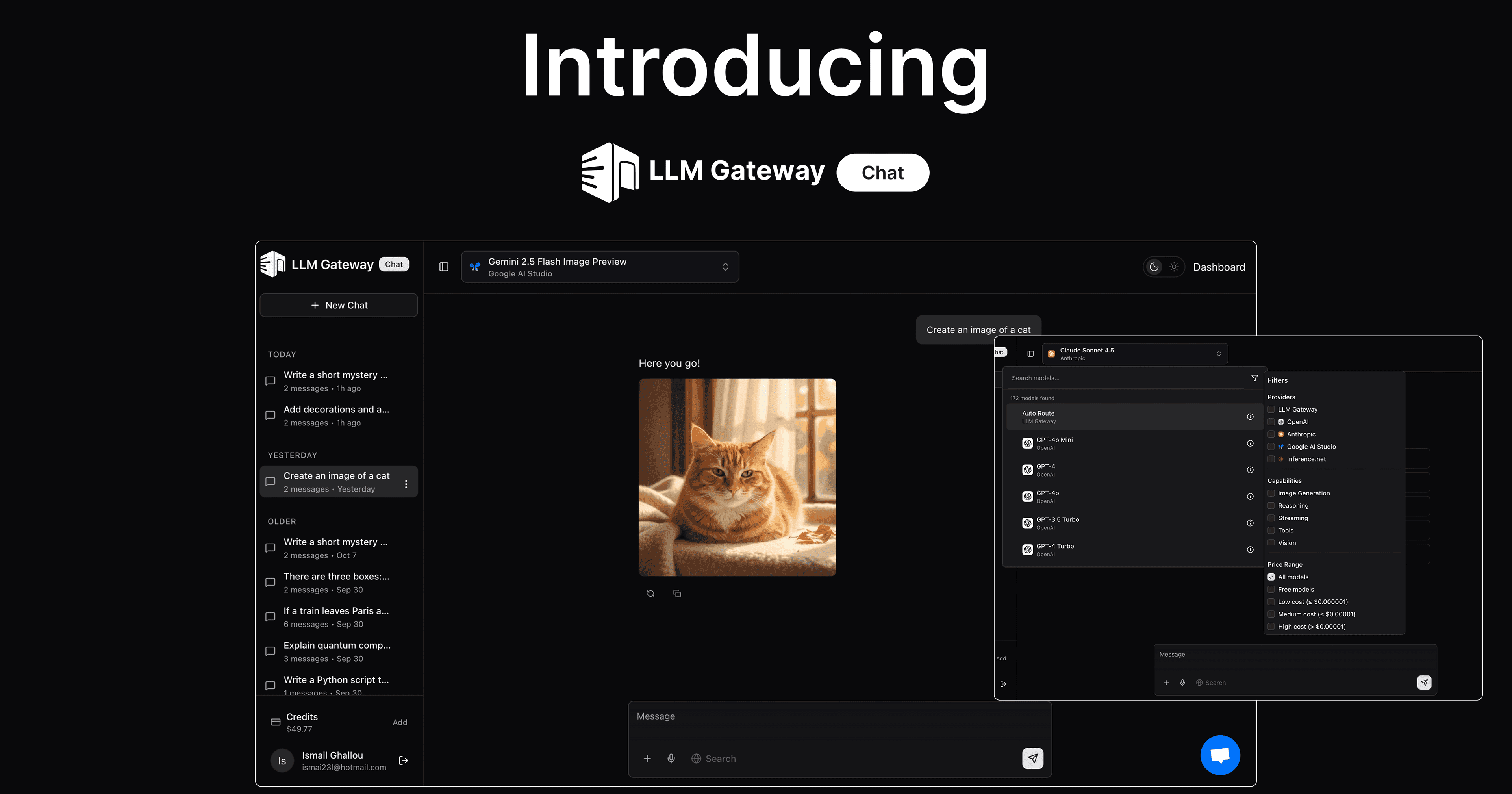

Compare GPT-5, Claude, Gemini, and 180+ other models side by side. Generate images, test prompts, and find the best model for your use case.

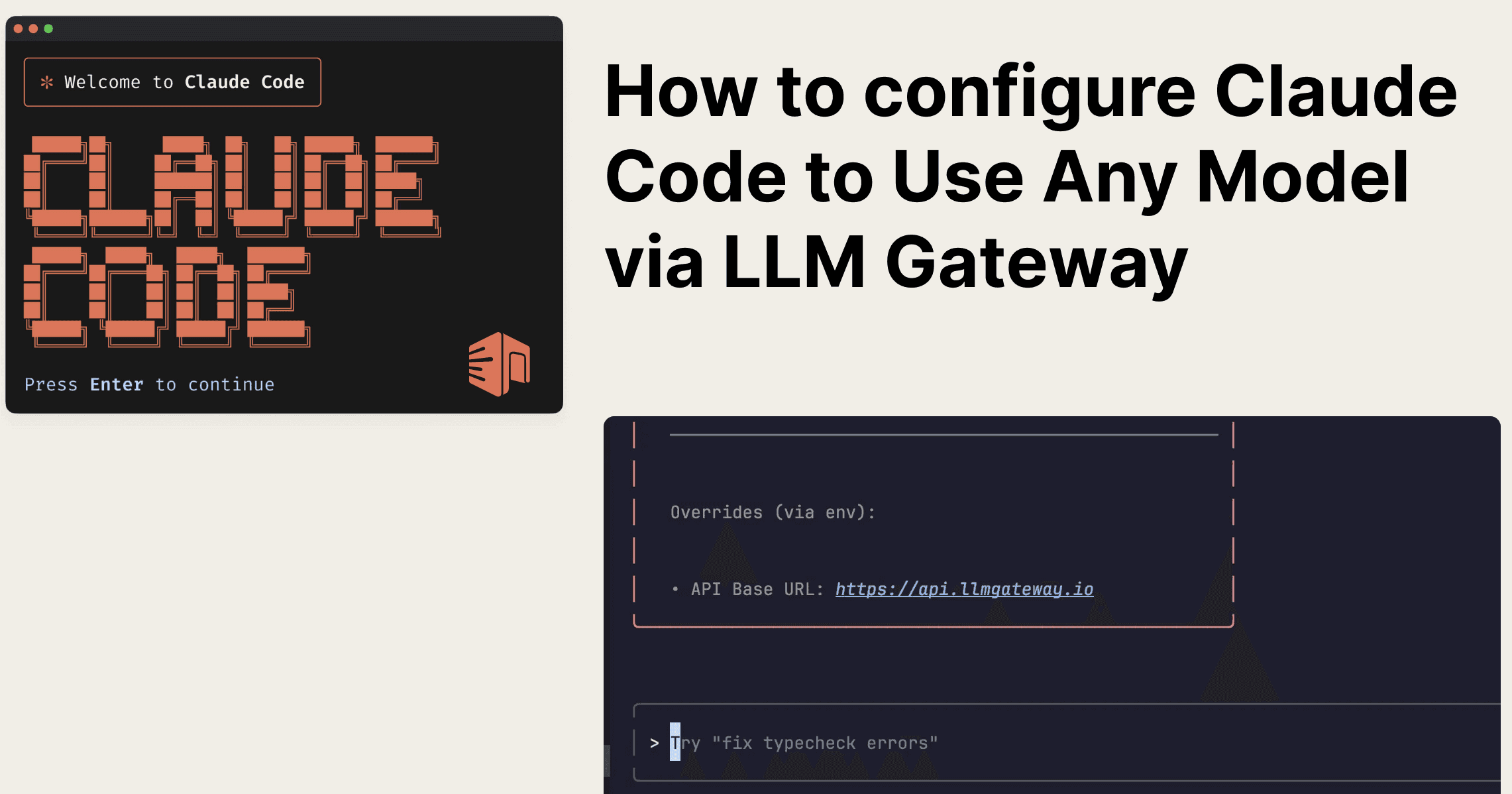

Use GPT-5, Gemini, or any model with Claude Code. Three environment variables, zero code changes.

Connect your internal LLM deployments or any OpenAI-compatible API to LLM Gateway—and get the same analytics, caching, and routing.

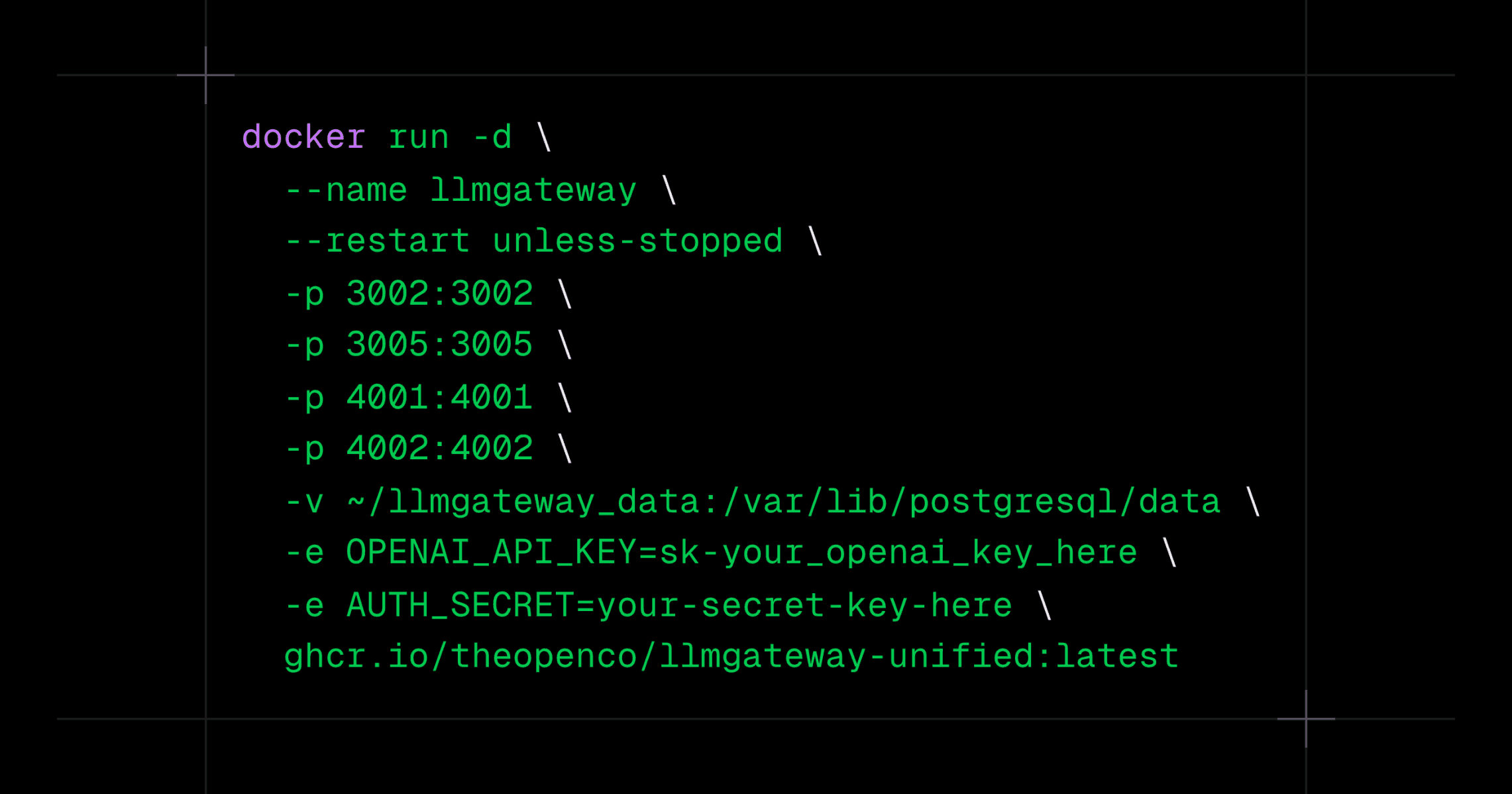

Run LLM Gateway on your own infrastructure in under 5 minutes. Full control, zero platform fees.

One API for 180+ models across 60+ providers. Route requests, track costs, and switch models without changing your code.